Pagination and SEO: What you need to know in 2025

Table of Contents

Learn how search engines handle paginated content, plus tips to prevent indexing issues and boost crawl efficiency.

Ever wondered why some of your ecommerce products or blog posts never appear on Google?

The way your site handles pagination could be the reason.

This article explores the complexities of pagination – what it is, whether your site needs it for SEO, and how it affects search in 2025.

- What is pagination?

- Examples of pagination in action.

- Why is pagination important for SEO?

- Google’s deprecation of

rel=prev/next. - Why pagination is still important in 2025: The infinite scroll debate.

- How JavaScript can interfere with pagination.

- How to handle indexing and canonical tags for paginated URLs.

What is pagination?

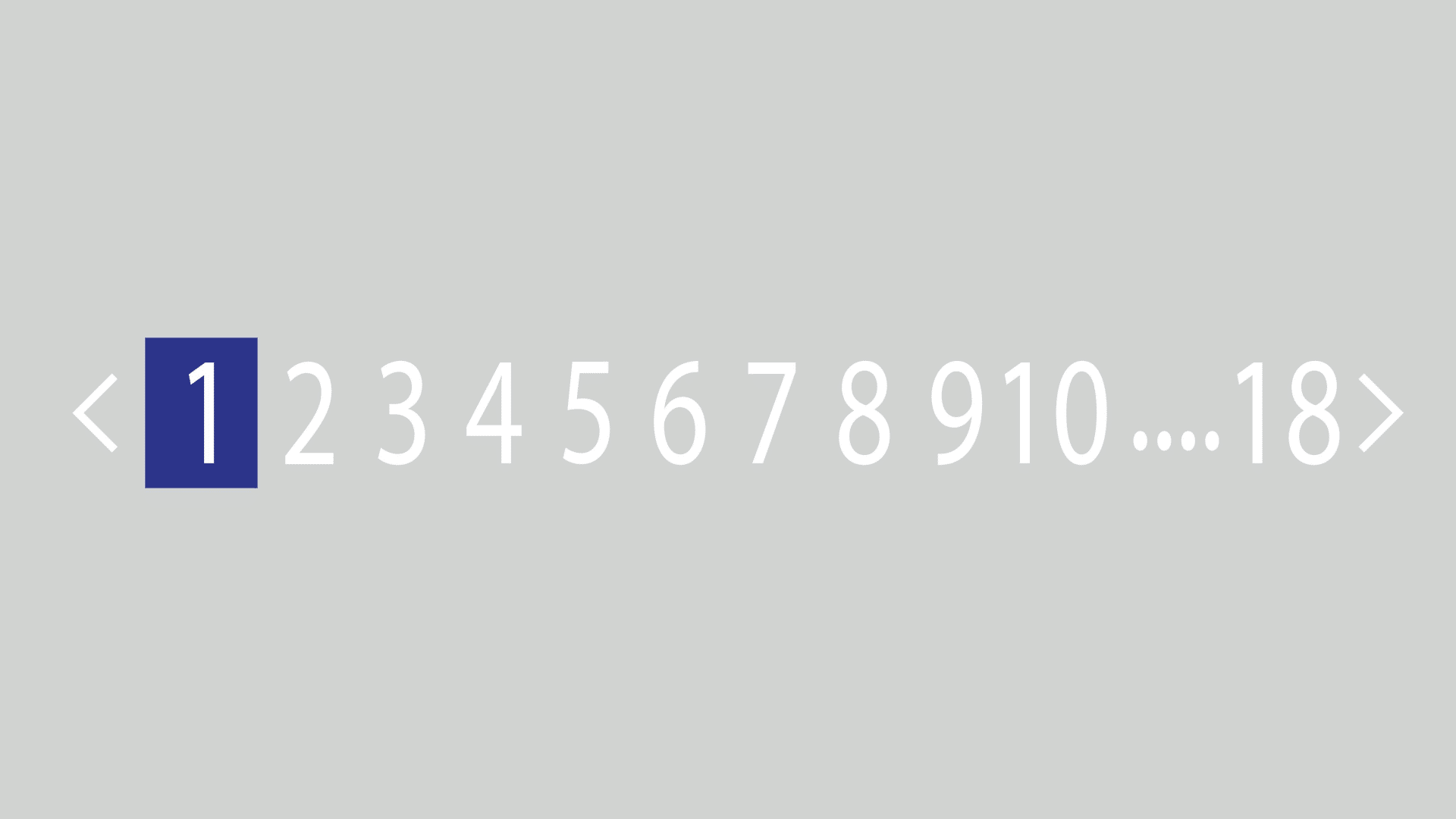

Pagination is the coding and technical framework on webpages that allows content to be divided across multiple pages while remaining thematically connected to the original parent page.

When a single page contains too much content to load efficiently, pagination helps by breaking it into smaller sections.

This improves user experience and unburdens the client (i.e., web browser) from loading too much information – much of which may not even be reviewed by the user.

Examples of pagination in action

Product listings

One common example of pagination is navigating multiple pages of product results within a single product feed or category.

Let’s look at Virgin Experience Days, a site that sells gifted experiences similar to Red Letter Days.

Take their Mother’s Day experiences page:

https://www.virginexperiencedays.co.uk/mothers-day-gifts

Scroll down to the “All Mother’s Day Experiences & Gift Ideas Experiences” section, and you’ll see a staggering 1,635 experiences to choose from.

That’s a lot.

Clearly, listing all of them on a single page wouldn’t be practical.

It would result in excessive vertical scrolling and could slow down page loading times.

Further down the page, you’ll find pagination links:

Clicking a pagination link moves users to separate product listing pages, such as page 2:

https://www.virginexperiencedays.co.uk/mothers-day-gifts?page=2

In the URL, ?page=2 appears as a parameter extension, a common pagination syntax.

Variations include ?p=2 or /page/2/, but the purpose remains the same – allowing users to browse additional pages of listings.

Even major retailers like Amazon use similar pagination structures.

Pagination also helps search engines discover deeply nested products.

If a site is so large that all its products can’t be listed in a single XML sitemap, pagination links provide an additional way for crawlers to access them.

Even when XML sitemaps are in place, internal linking remains important for SEO.

While pagination links aren’t the strongest ranking signal, they serve a foundational role in ensuring content is discoverable.

Dig deeper: Internal linking for ecommerce: The ultimate guide

Blog and news feeds

Pagination isn’t limited to product listings, it’s also widely used in blog and news feeds.

Take Search Engine Land’s SEO article archive:

https://searchengineland.com/library/seo

In this page, you can access a feed of all SEO-related posts on Search Engine Land.

Scrolling down, you’ll find pagination links.

Clicking “2” takes you to the next set of SEO articles:

https://searchengineland.com/library/seo/page/2

Pagination inside content

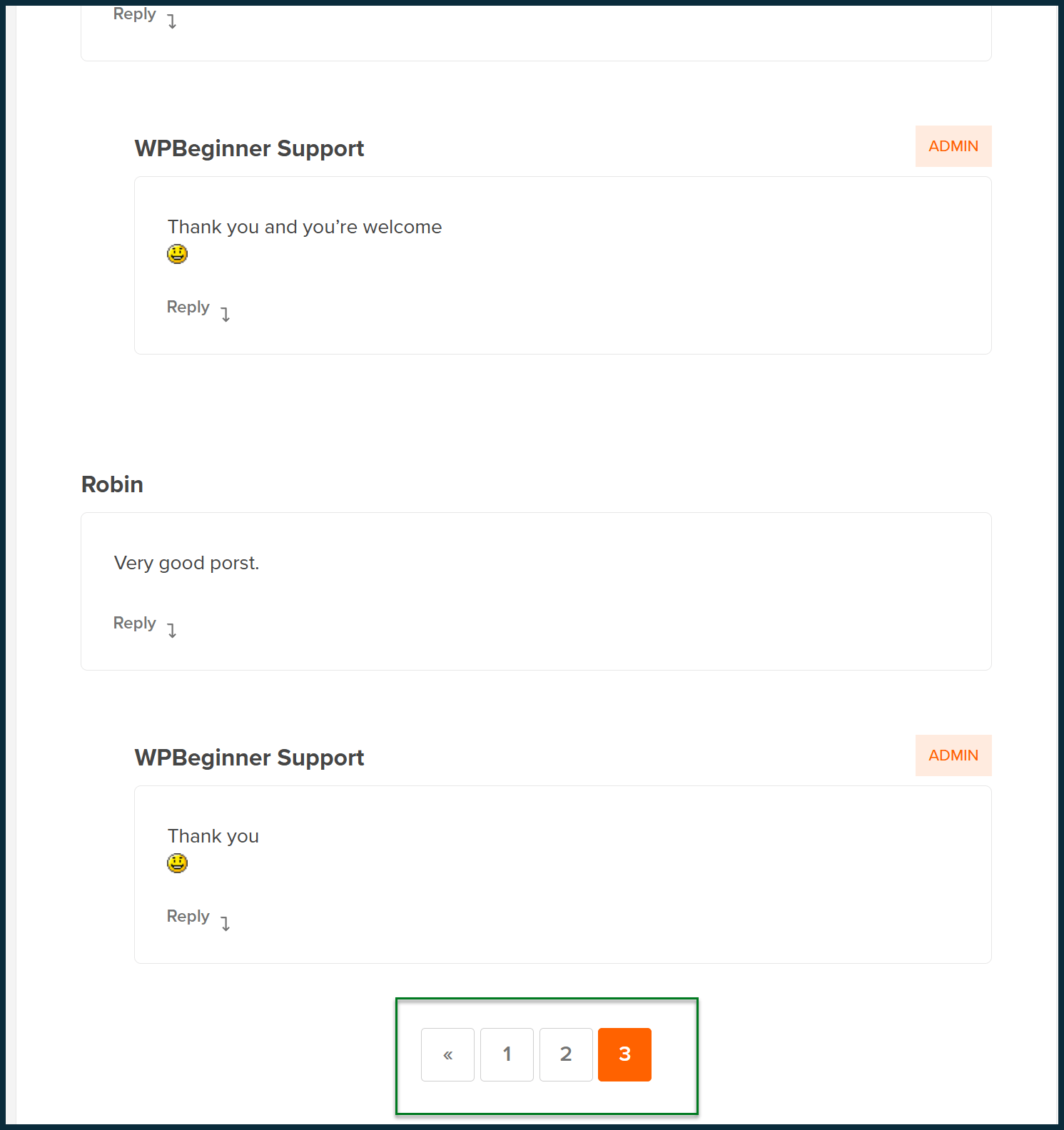

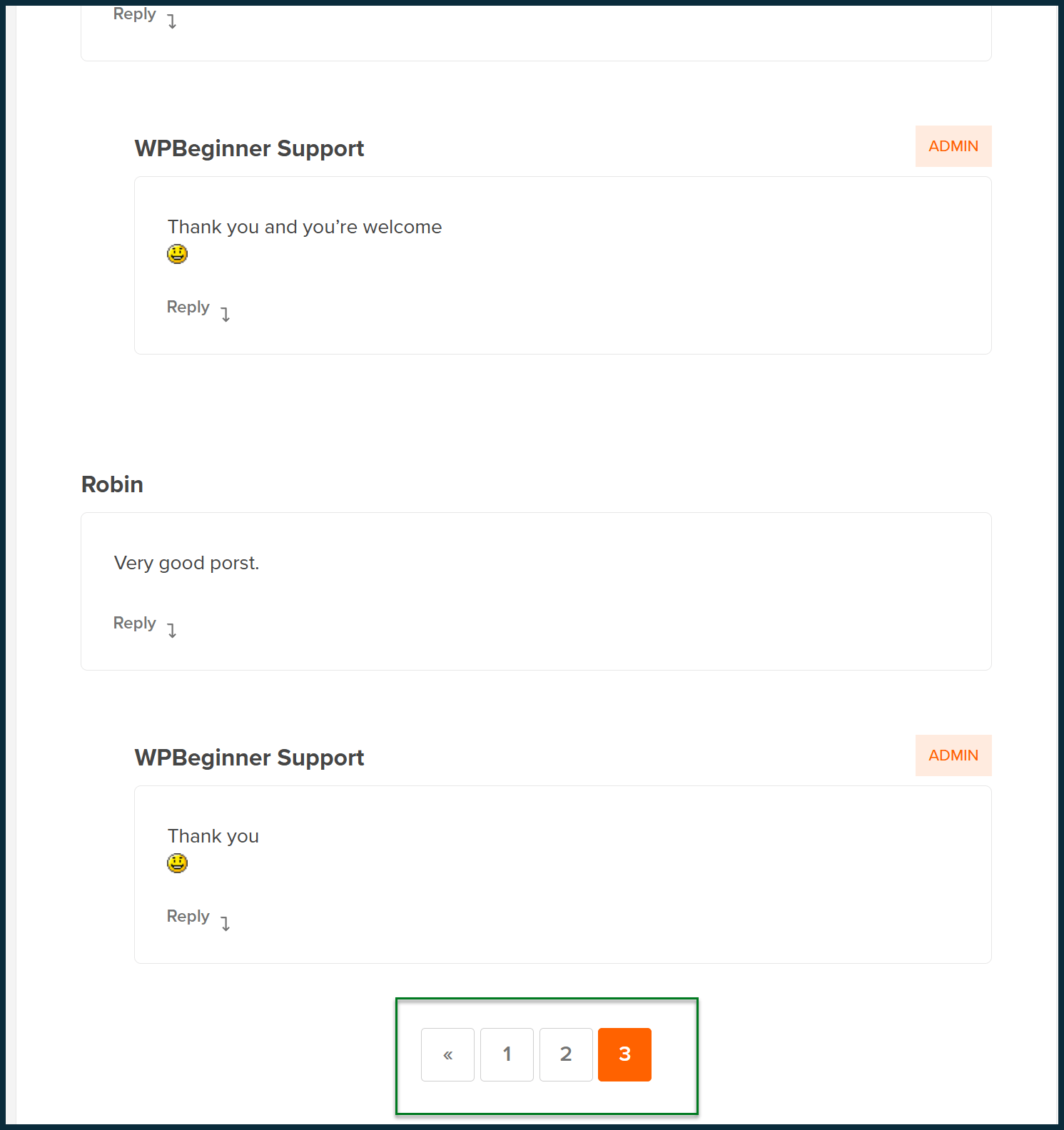

Pagination can also exist within individual pieces of content rather than at a feed level.

For example, some news websites paginate comment sections when a single article receives thousands of comments.

Similarly, forum threads with extensive discussions often use pagination to break up replies across multiple pages.

Consider this post from WPBeginner:

https://www.wpbeginner.com/beginners-guide/how-to-choose-the-best-blogging-platform/

Scroll to the bottom, and you’ll see that even the comment section uses pagination to organize user responses.

Why is pagination important for SEO?

Pagination plays a crucial role in SEO for several reasons:

Indexing

Without pagination, search crawlers may struggle to find deeply nested content such as blog posts, news articles, products, and comments.

Crawl efficiency

Pagination increases the number of URLs on a site, which might seem counterproductive to efficient crawling.

However, most search engines recognize common pagination structures – even without rich markup.

This understanding allows them to prioritize crawling more valuable content while ignoring less important paginated pages.

Internal linking

Pagination also contributes to internal linking.

While pagination links don’t carry significant link authority, they provide structure.

Google tends to pay less attention to orphaned pages – those without inbound links – so pagination can help ensure content remains connected.

Managing content duplication

If URLs aren’t structured properly, search engines may mistakenly identify them as duplicate content.

Pagination isn’t as strong a signal for content consolidation as redirects or canonical tags.

Still, when implemented correctly, it helps search engines differentiate between paginated pages and true duplicates.

Google’s deprecation of rel=prev/next

Google previously supported rel=prev/next for declaring paginated content.

However, in March 2019, it was revealed that Google had not used this markup for some time.

As a result, these tags are no longer necessary in a website’s code.

Google likely used rel=prev/next to study common pagination structures.

Over time, those insights were integrated into its core algorithms, making the markup redundant.

Some SEOs believe these tags may still help with crawling, but there is little evidence to support this.

If your site doesn’t use this markup, there’s no need to worry. Google can still recognize paginated URLs.

If your site uses it, there’s also no urgent need to remove it, as it won’t negatively impact your SEO.

Why pagination is still important in 2025: The infinite scroll debate

Alternate methods for browsing large amounts of content have emerged over the past couple of decades.

“View more” or “Load more” buttons often appear under comment streams, while infinite scroll or lazy-loaded feeds are common for posts and products.

Some argue these features are more user-friendly.

Originally pioneered by social networks such as Twitter (now X), this form of navigation helped boost social interactions.

Some websites have adopted it, but why isn’t it more widespread?

From an SEO perspective, the issue is that search engine crawlers interact with webpages in a limited way.

While headless browsers may sometimes execute JavaScript-based content during a page load, search crawlers typically don’t “scroll down” to trigger new content.

A search engine bot certainly won’t scroll indefinitely to load everything.

As a result, websites relying solely on infinite scroll or lazy loading risk orphaning articles, products, and comments over time.

For major news brands with strong SEO authority and extensive XML sitemaps, this may not be a concern.

The trade-off between SEO and user experience may be acceptable.

But for most websites, implementing these technologies is likely a bad idea.

Search crawlers may not spend time scrolling through content feeds, but they will click hyperlinks – including pagination links.

How JavaScript can interfere with pagination

Even if your site doesn’t use infinite scroll plugins, JavaScript can still interfere with pagination.

Since July 2024, Google has at least attempted to render JavaScript for all visited pages.

However, details on this remain vague.

- Does Google render all pages, including JavaScript, at the time of the crawl?

- Or is execution deferred to a separate processing queue?

- How does this affect Google’s ranking algorithms?

- Does Google make initial determinations before executing JavaScript weeks later?

There are no definitive answers to these questions.

What we do know is that “dynamic rendering is on the decline,” according to the 2024 Web Almanac SEO Chapter.

If Google’s effort to execute JavaScript for all crawled pages is progressing well – which seems unlikely given the potential efficiency drawbacks – why are so many sites reverting to a non-dynamic state?

This doesn’t mean JavaScript use is disappearing.

Instead, more sites may be shifting to server-side or edge-side rendering.

If your site uses traditional pagination but JavaScript interferes with pagination links, it can still lead to crawling issues.

For example, your site might use traditional pagination links, but the main content of your page is lazy-loaded.

In turn, the pagination links only appear when a user (or bot) scrolls the page.

Dig deeper: A guide to diagnosing common JavaScript SEO issues

How to handle indexing and canonical tags for paginated URLs

SEO professionals often recommend using canonical tags to point paginated URLs to their parent pages, marking them as non-canonical.

This practice was especially common before Google introduced rel=prev/next.

Since Google deprecated rel=prev/next, many SEOs remain uncertain about the best way to handle pagination URLs.

Avoid blocking paginated content via robots.txt or with canonical tags.

Doing so prevents Google from crawling or indexing those pages.

In the case of news posts, certain comment exchanges might be considered valuable by Google, potentially connecting a paginated version of an article with keywords that wouldn’t otherwise be associated with it.

This can generate free traffic – something worth keeping in 2025.

Similarly, restricting the crawling and indexing of paginated product feeds could leave some products effectively soft-orphaned.

In SEO, there’s a tendency to chase perfection and aim for complete crawl control.

But being overly aggressive here can do more harm than good, so tread carefully.

There are cases where it makes sense to de-canonicalize or limit the crawling of paginated URLs.

Before taking that step, make sure you have data showing that crawl-efficiency issues outweigh the potential free traffic gains.

If you don’t have that data, don’t block the URLs. Simple!

If you liked the article, do not forget to share it with your friends. Follow us on Google News too, click on the star and choose us from your favorites.

If you want to read more like this article, you can visit our Technology category.